Navigating the Maze of AI Black Boxes: Understanding the Risks and Pathways to Transparency

In the realm of artificial intelligence (AI), the term "black box" refers to AI systems that operate without providing transparent explanations for their decisions. This lack of transparency can pose significant challenges, particularly in situations where understanding the reasoning behind AI-driven decisions is crucial for making informed choices or ensuring fairness and accountability.

Imagine you're learning a new skill, like playing a musical instrument. You practice diligently, following instructions and memorizing patterns. However, you don't fully comprehend the intricate workings of your brain as it processes the music, coordinates your movements, and develops the necessary muscle memory. This is akin to dealing with a black box in AI.

Just as your brain operates without fully revealing its inner workings, AI systems often make decisions without providing clear explanations. It's like having a brilliant but enigmatic teacher who guides your learning without explaining the reasoning behind their methods. While this can lead to impressive results, it also raises concerns about understanding the underlying principles and potential biases that may influence the AI's decisions.

Understanding the Components of AI Black Boxes

The three primary components of a machine-learning system are algorithms, training data, and models. AI developers often protect their intellectual property by concealing the model or training data, further obscuring the inner workings of these systems. Black box algorithms make it difficult to comprehend the decision-making processes of AI models, raising concerns about potential biases, discrimination, and a lack of accountability.

Black box AI systems pose several inherent risks, including:

- Bias and Discrimination: Without transparency into the decision-making process, it becomes difficult to detect and address potential biases embedded within the training data or algorithms, leading to discriminatory outcomes.

- Lack of Accountability: The absence of clear explanations for AI decisions can hinder accountability, making it challenging to identify and rectify erroneous or unjust outcomes.

- Security Vulnerabilities: Black box AI systems may be more vulnerable to attack. Because their inner workings are not fully understood, flaws are harder to find and fix, making it easier for malicious actors to exploit them.

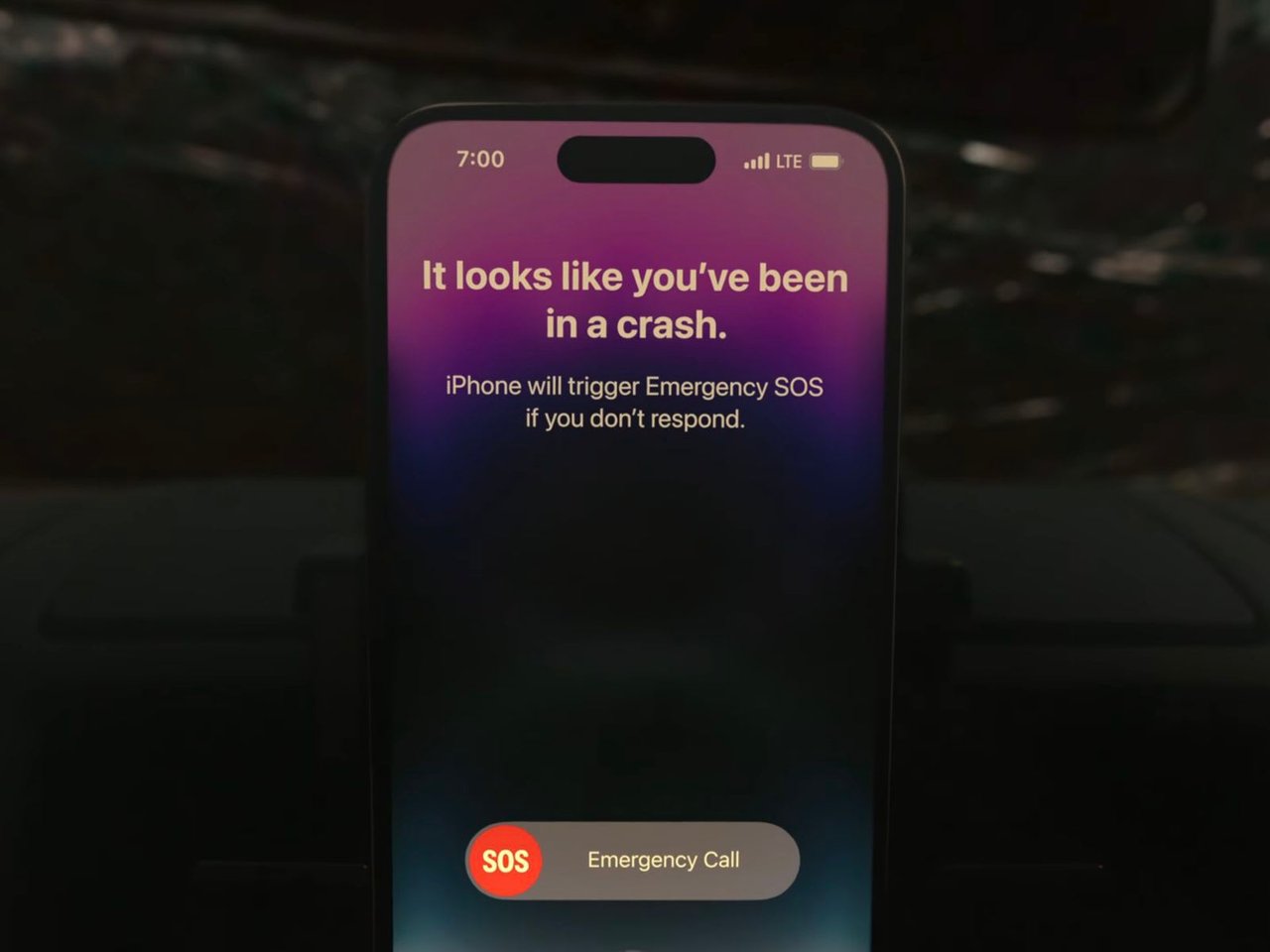

Consider a scenario where an AI system is used to diagnose medical conditions. The system might recommend a specific treatment plan, but without understanding the reasoning behind the recommendation, doctors could be hesitant to follow it. They need to know the factors the AI considered and how it arrived at its conclusion to make informed decisions about patient care.

In the financial realm, AI is used to assess creditworthiness and make loan decisions. If an AI system denies a loan application without providing clear explanations, the applicant is left in the dark, unable to understand why they were rejected and how to improve their chances of getting a loan in the future.

Even in the legal system, AI is being used to guide decisions about bail, sentencing, and parole. Without transparency, there's a risk of perpetuating biases or making unfair decisions that could have significant consequences for individuals' lives.

Mitigating the Risks of Black Box AI

To address the black box problem, researchers are exploring various techniques, including:

- Explainable AI (XAI): XAI methods aim to make AI models more transparent and interpretable, providing explanations for their decisions. This could involve breaking down complex AI models into simpler ones, using visualizations to represent the decision-making process, or generating text explanations.

- Counterfactual analysis: This technique involves systematically removing potential factors from the input data and observing how each removal affects the AI's decision. By understanding which factors contribute to a particular decision, we can gain insights into the AI's reasoning process.

- Human oversight: While AI can be powerful, human oversight remains crucial. Humans can review AI decisions, identify potential biases or errors, and provide guidance to ensure that AI systems are aligned with ethical principles and desired outcomes.
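One common way to break a complex model down into a simpler one, the first XAI approach listed above, is a surrogate model: query the opaque model on many inputs, then fit an easy-to-read model to its answers. The sketch below is a minimal illustration under stated assumptions, not a production method. `black_box`, the three loan-style features, and their value ranges are hypothetical, and the surrogate is a decision stump, a single rule of the form "approve if feature >= threshold", which is about as simple as an interpretable model gets.

```python
import random
from typing import Dict, List, Tuple

Applicant = Dict[str, float]


def black_box(a: Applicant) -> int:
    """Hypothetical opaque model. We only call it; we never inspect its logic."""
    # A weighted combination of three features; a one-feature rule can only approximate it.
    score = a["income"] / 20 - a["debt_ratio"] * 3 + min(a["years_employed"], 5) * 0.2
    return 1 if score > 2.0 else 0


def fit_stump(samples: List[Applicant], labels: List[int]) -> Tuple[str, float, float]:
    """Find the single rule 'approve if feature >= threshold' that best mimics the labels.

    Returns (feature, threshold, fidelity), where fidelity is the fraction of
    samples on which the rule agrees with the black box's decisions.
    """
    best: Tuple[str, float, float] = ("", 0.0, -1.0)
    for feature in samples[0]:
        # Try every observed value of this feature as a candidate threshold.
        for threshold in sorted({s[feature] for s in samples}):
            preds = [1 if s[feature] >= threshold else 0 for s in samples]
            agree = sum(p == y for p, y in zip(preds, labels))
            fidelity = agree / len(labels)
            if fidelity > best[2]:
                best = (feature, threshold, fidelity)
    return best


def random_applicant(rng: random.Random) -> Applicant:
    """Hypothetical input generator used to probe the black box."""
    return {
        "income": rng.uniform(10, 120),       # assumed units: thousands per year
        "debt_ratio": rng.uniform(0.0, 1.0),  # debt payments as a fraction of income
        "years_employed": rng.uniform(0, 10),
    }


if __name__ == "__main__":
    rng = random.Random(0)
    samples = [random_applicant(rng) for _ in range(500)]
    labels = [black_box(s) for s in samples]  # query the black box for its decisions
    feature, threshold, fidelity = fit_stump(samples, labels)
    print(f"Surrogate rule: approve if {feature} >= {threshold:.1f} "
          f"(agrees with the black box on {fidelity:.0%} of samples)")
```

The fidelity figure matters as much as the rule itself: a surrogate that agrees with the black box only part of the time explains only that part of its behavior.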

In contrast to black box AI, white-box AI systems are transparent, allowing users to understand how the system arrives at its conclusions. This transparency is particularly valuable in situations where clarity and comprehensibility are essential, such as in medical diagnosis or financial decision-making.
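To make the white-box idea concrete, here is a minimal sketch of a transparent linear scoring model in which every weight is visible, so each decision can be broken down into per-feature contributions. It also speaks to the loan scenario described earlier: a denied applicant could be told which factor counted most against them. The feature names, weights, bias, and threshold are all hypothetical, chosen only for illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical, hand-set weights. In a white-box model these are open to inspection.
WEIGHTS: Dict[str, float] = {
    "income": 0.04,         # per thousand of annual income (assumed units)
    "debt_ratio": -3.0,     # debt payments as a fraction of income
    "late_payments": -0.5,  # count over a hypothetical look-back window
}
BIAS = -1.0
THRESHOLD = 0.0  # approve when the score is at or above this value


def explain(applicant: Dict[str, float]) -> Tuple[bool, float, List[Tuple[str, float]]]:
    """Return (approved, score, contributions sorted from most negative to most positive)."""
    contributions = [(name, w * applicant[name]) for name, w in WEIGHTS.items()]
    score = BIAS + sum(c for _, c in contributions)
    contributions.sort(key=lambda item: item[1])  # biggest negative factor first
    return score >= THRESHOLD, score, contributions


if __name__ == "__main__":
    approved, score, contribs = explain({"income": 45, "debt_ratio": 0.6, "late_payments": 2})
    print(f"approved={approved} score={score:.2f}")
    for name, value in contribs:
        print(f"  {name:>14}: {value:+.2f}")
```

The trade-off is that a model this simple may be less accurate than a deep network on complex data, which is part of why black box models remain in wide use despite their opacity.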

The black box problem is particularly prevalent in deep neural networks, which are complex AI models with millions of parameters and intricate architectures. To address this issue, researchers are exploring techniques such as:

- Counterfactual Analysis: Applied to deep neural networks, removing or masking individual inputs (for example, regions of an image or words in a sentence) and observing how the decision changes can reveal which factors drive the model's output.

- Visualization Techniques: Visualizing the decision-making process of deep neural networks can aid in understanding their inner workings and identifying potential biases.
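The removal idea and a basic visualization can be sketched together in a few lines. Strictly speaking, the code below implements feature ablation (also called occlusion), a simple relative of counterfactual analysis: each feature is reset to a "removed" baseline value one at a time, and the change in the model's output is recorded and drawn as a text bar chart. This is a minimal sketch under assumptions: `opaque_score`, its feature names, and the all-zero baseline are hypothetical, and the text bars stand in for the graphical plots that visualization tools typically produce.

```python
from typing import Callable, Dict

Inputs = Dict[str, float]


def opaque_score(x: Inputs) -> float:
    """Hypothetical black-box scorer; in practice this might be a deep network."""
    # income and debt_ratio are multiplied, so their effects are not independent.
    return 0.05 * x["income"] * (1 - x["debt_ratio"]) + 0.3 * min(x["years_employed"], 5)


def removal_attributions(
    model: Callable[[Inputs], float], x: Inputs, baseline: Inputs
) -> Dict[str, float]:
    """Reset one feature at a time to its baseline value and record
    (original output - altered output). A positive value means the feature
    pushed the output up for this particular input."""
    original = model(x)
    attributions: Dict[str, float] = {}
    for name in x:
        altered = dict(x)  # copy so the original input is left untouched
        altered[name] = baseline[name]
        attributions[name] = original - model(altered)
    return attributions


def render_bars(attributions: Dict[str, float], width: int = 20) -> str:
    """Minimal text visualization: one bar per feature, longest = most influential."""
    largest = max(abs(v) for v in attributions.values()) or 1.0  # avoid dividing by zero
    lines = []
    for name, value in sorted(attributions.items(), key=lambda kv: -abs(kv[1])):
        bar = "#" * round(width * abs(value) / largest)
        lines.append(f"{name:>14} {bar:<{width}} {value:+.2f}")
    return "\n".join(lines)


if __name__ == "__main__":
    applicant = {"income": 60.0, "debt_ratio": 0.5, "years_employed": 4.0}
    baseline = {"income": 0.0, "debt_ratio": 0.0, "years_employed": 0.0}  # hypothetical "removed" values
    print(render_bars(removal_attributions(opaque_score, applicant, baseline)))
```

Because income and debt_ratio interact inside `opaque_score`, these single-feature effects need not add up to the total difference between the input's score and the baseline's score, which is one reason removal-based explanations should be read with care.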

Conclusion

We have created a superpowered magician that pulls impressive tricks out of its hat, yet we are left scratching our heads, wondering how it does them. This is the black box problem in AI: we don't always understand how these systems reach their conclusions, yet we continue to develop them. The black box problem poses significant challenges, but ongoing research and development efforts are paving the way for more transparent and interpretable AI systems. If we address the risks associated with black box AI and embrace XAI techniques, can we move toward a future where AI is not only powerful but also trustworthy and accountable?

Author: Stephen Miller, 11.27.23

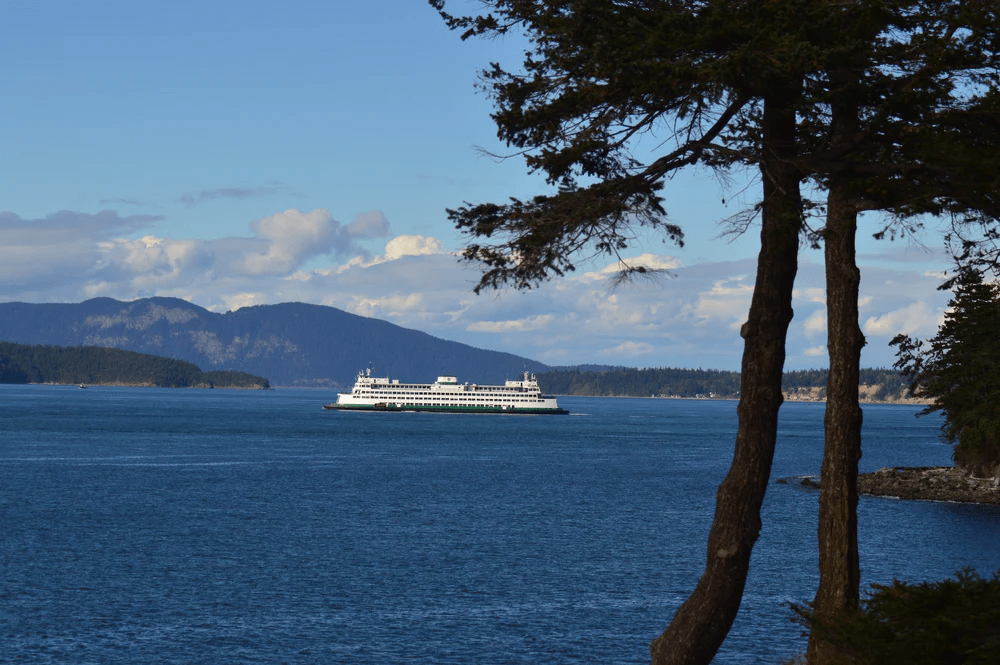

The views, information, and opinions expressed in this article are those of the author(s) and do not necessarily reflect the views of livingonwhidbey.com, its subsidiaries, or its parent companies.

Copyright © 2023

References

Vijay. "Unraveling the Mystery: Why Deep Learning Is Termed a Black Box in AI Model Complexity." Medium, Blog of Vijay, https://blogofvijay.medium.com/unraveling-the-mystery-why-deep-learning-is-termed-a-black-box-in-ai-model-complexity-197832848efc

Vox. "Chat GPT: The AI Science Mystery." Unexplainable, Vox, 15 July 2023, https://www.vox.com/unexplainable/2023/7/15/23793840/chat-gpt-ai-science-mystery-unexplainable-podcast

Tensorway. "Black Box AI." Tensorway, Tensorway, https://www.tensorway.com/post/black-box-ai

Toolify AI. "Unveiling the Mystery of the AI Black Box." Toolify AI News, Toolify AI, https://www.toolify.ai/ai-news/unveiling-the-mystery-of-the-ai-black-box-27740

The Conversation. "What Is a Black Box? A Computer Scientist Explains What It Means When the Inner Workings of AIs Are Hidden." The Conversation, The Conversation, https://theconversation.com/what-is-a-black-box-a-computer-scientist-explains-what-it-means-when-the-inner-workings-of-ais-are-hidden-203888

Fana, Angel. "Black Box AI: The Mystery Behind Artificial Intelligence." LinkedIn, Angel Fana, https://www.linkedin.com/pulse/black-box-ai-mystery-behind-artificial-intelligence-angel-fana/